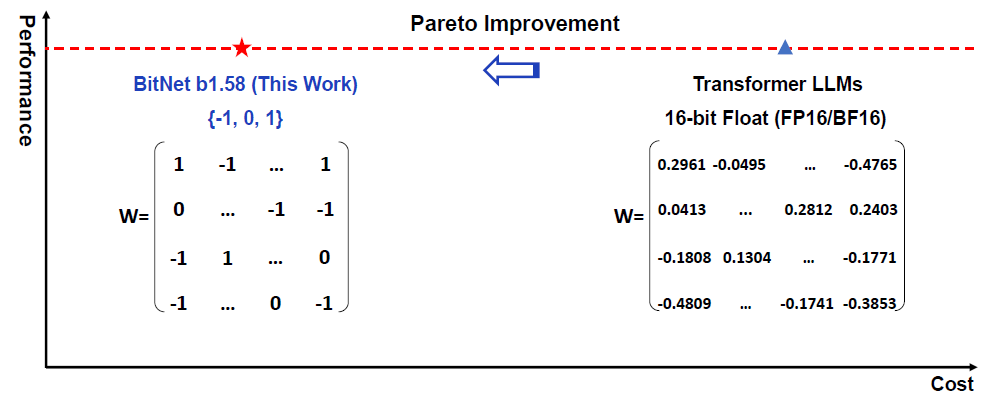

The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits

In this post, we dive into Microsoft's paper "The Era of 1-bit LLMs", which shows a promising direction for low-cost large language models.
