Consistency models are a new type of generative model, introduced by OpenAI in a paper titled “Consistency Models”. In this post we explain why consistency models are interesting, what they are and how they are created. Let’s start with why we should care about consistency models.

Why Should We Care About Consistency Models?

Dominant image generation models, such as DALL-E 2, Imagen and Stable Diffusion, are diffusion models. These models can generate impressive results, such as the avocado chair and the cute dog images. However, they have a drawback that consistency models can help solve.

The Drawback of Diffusion Models

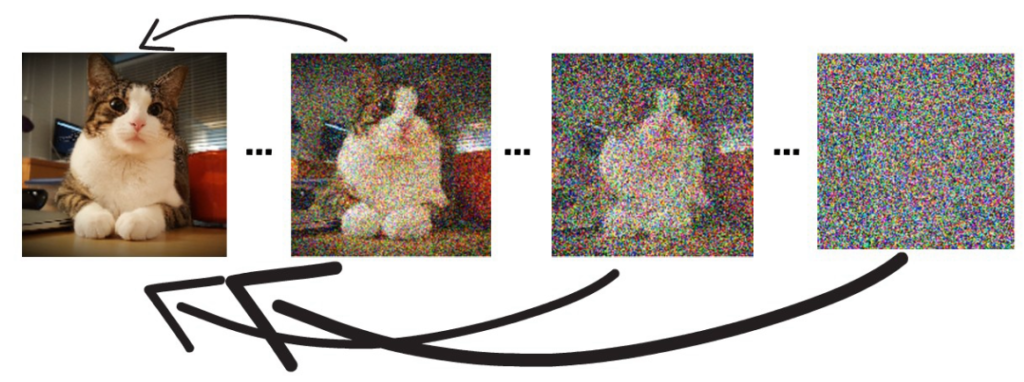

Diffusion models learn to gradually remove noise from an image in order to produce a clear sample. Generation starts from a pure random-noise image, and each step removes some of the noise until we end up with a clear image of a cat. The drawback is that producing a clear image requires going through this whole gradual denoising process, which can take tens to thousands of steps, each one a full pass through the model. This makes sampling slow, so diffusion models are, for example, a poor fit for real-time applications. Let’s see how consistency models can help.
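To make the cost concrete, here is a toy sketch of iterative diffusion sampling that simply counts model calls. It assumes an EDM-style setup (noise levels from 80 down to 0.002, with Euler steps along the probability-flow ODE), and `toy_denoiser` is a hypothetical oracle that already knows the clean image, standing in for a trained network.

```python
import random

def toy_denoiser(x, sigma, clean_image):
    # Hypothetical stand-in for a trained diffusion model: it simply "knows" the clean image.
    return list(clean_image)

def diffusion_sample(x, sigmas, clean_image):
    """Many small Euler steps along dx/dsigma = (x - D(x, sigma)) / sigma."""
    model_calls = 0
    for sigma, sigma_next in zip(sigmas[:-1], sigmas[1:]):
        denoised = toy_denoiser(x, sigma, clean_image)  # one model evaluation per step
        model_calls += 1
        x = [xi + (sigma_next - sigma) * (xi - di) / sigma for xi, di in zip(x, denoised)]
    return x, model_calls

random.seed(0)
clean_image = [0.2, -0.5, 0.9]  # a tiny 3-"pixel" image
sigma_max, sigma_min, num_steps = 80.0, 0.002, 1000
# Geometrically spaced noise levels from sigma_max down to sigma_min.
sigmas = [sigma_max * (sigma_min / sigma_max) ** (i / num_steps) for i in range(num_steps + 1)]
noise_image = [sigma_max * random.gauss(0.0, 1.0) for _ in clean_image]
sample, model_calls = diffusion_sample(noise_image, sigmas, clean_image)
print(model_calls)  # 1000
```

With a real network, each of those 1,000 calls is a full forward pass, which is why sampling time grows with the number of steps.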

What Are Consistency Models?

Consistency models are similar to diffusion models in the sense that they also learn to remove noise from an image. However, consistency models dramatically reduce the number of steps needed. The idea is to teach the model to map any image on a denoising path directly to the clear image at the end of that path.

The name consistency models comes from this idea: the model learns to be consistent, producing the same clear image for any point on the same path.
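Stated a bit more formally, following the paper's notation as we read it: times run from a small ε (almost clean) to T (pure noise), and for any two points on the same path the model f_θ should give the same output. The second condition (the boundary condition) says that at the clean end of the path the model returns its input unchanged.

```latex
f_\theta(\mathbf{x}_t, t) = f_\theta(\mathbf{x}_{t'}, t') \quad \text{for all } t, t' \in [\epsilon, T] \text{ on the same path},
\qquad
f_\theta(\mathbf{x}_\epsilon, \epsilon) = \mathbf{x}_\epsilon
```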

With that capability, we can jump directly from a completely noisy image to a clear image in a single step, skipping the slow, gradual process of diffusion models.
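A minimal sketch of one-step sampling, assuming the skip-connection parameterization described in the paper, f_θ(x, t) = c_skip(t)·x + c_out(t)·F_θ(x, t), with σ_data = 0.5, ε = 0.002 and T = 80 as we understand them from its image experiments. The `network` function is a hypothetical placeholder for the trained neural network; note how the coefficients make the boundary condition hold automatically at t = ε.

```python
import math
import random

# Constants as we understand them from the paper's image experiments (assumption).
SIGMA_DATA, EPS, T = 0.5, 0.002, 80.0

def c_skip(t):
    # Equals 1 at t = EPS, so the output there is the input itself.
    return SIGMA_DATA ** 2 / ((t - EPS) ** 2 + SIGMA_DATA ** 2)

def c_out(t):
    # Equals 0 at t = EPS, so the network's contribution vanishes there.
    return SIGMA_DATA * (t - EPS) / math.sqrt(SIGMA_DATA ** 2 + t ** 2)

def network(x, t):
    # Hypothetical placeholder for the trained neural network F_theta(x, t).
    return [0.1 * xi for xi in x]

def consistency_fn(x, t):
    """f_theta(x, t) = c_skip(t) * x + c_out(t) * F_theta(x, t)."""
    out = network(x, t)
    return [c_skip(t) * xi + c_out(t) * oi for xi, oi in zip(x, out)]

def one_step_sample(num_pixels, rng):
    # Start from pure noise at the largest time T ...
    x_T = [T * rng.gauss(0.0, 1.0) for _ in range(num_pixels)]
    # ... and map it to a clean image with a single model evaluation.
    return consistency_fn(x_T, T)

image = one_step_sample(4, random.Random(0))
```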

Another powerful attribute is that we can use a few steps instead of one when a higher-quality image is needed, trading extra compute for quality.

This differs from diffusion models, where each step applies the model to the current, partially denoised image to remove a bit more noise. Instead, we start with a noisy image and run our trained consistency model on it to get a clear image. Then we add some random noise to that result, which gives us a noisy image again, and run the model on it once more. We can repeat this for as many iterations as we want, and according to the consistency models paper this yields better results.
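The multistep procedure can be sketched as follows. The re-noising scale sqrt(τ² − ε²) follows the paper's multistep sampling algorithm as we understand it; `toy_consistency_fn` is a hypothetical stand-in for a trained model, and the intermediate times are arbitrary illustrative values.

```python
import math
import random

EPS, T = 0.002, 80.0

def toy_consistency_fn(x, t):
    # Hypothetical stand-in for a trained consistency model f_theta(x, t):
    # it just pulls each pixel toward 0.5, more strongly at larger t.
    return [0.5 + (xi - 0.5) / (1.0 + t) for xi in x]

def multistep_sample(consistency_fn, taus, num_pixels, seed=0):
    """taus: decreasing intermediate times, e.g. [40.0, 10.0, 2.0]."""
    rng = random.Random(seed)
    x_T = [T * rng.gauss(0.0, 1.0) for _ in range(num_pixels)]
    x = consistency_fn(x_T, T)  # first jump: pure noise -> clear image
    calls = 1
    for tau in taus:
        # Add fresh noise to the current clear image, bringing it to time tau ...
        scale = math.sqrt(tau ** 2 - EPS ** 2)
        x_tau = [xi + scale * rng.gauss(0.0, 1.0) for xi in x]
        # ... then jump back to a clear image with one more model call.
        x = consistency_fn(x_tau, tau)
        calls += 1
    return x, calls

sample, calls = multistep_sample(toy_consistency_fn, [40.0, 10.0, 2.0], num_pixels=3)
print(calls)  # 4
```

Each extra time in `taus` costs exactly one more model call, which is the compute-for-quality trade-off described above.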

Methods of Creating Consistency Models

There are two possible ways to train a consistency model.

One way is distillation of a pre-trained diffusion model. With distillation, we transfer knowledge from a pre-trained diffusion model (such as Stable Diffusion) into a new consistency model. In general, distillation means transferring knowledge from one model into another, typically lighter, one; here the main gain is speed, since the consistency model needs far fewer sampling steps than its diffusion teacher.
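For reference, the distillation objective can be written as follows, in the paper's notation as we read it. Here s_φ is the pre-trained diffusion model's score estimate, the second line is a single Euler step of the probability-flow ODE dx/dt = −t·s_φ(x, t) from t_{n+1} back to t_n (other solvers such as Heun can be used instead), f_{θ⁻} is a slowly updated (exponential moving average) copy of the model, d is a distance such as squared L2 or LPIPS, and λ is a weighting function.

```latex
\begin{aligned}
\mathbf{x}_{t_{n+1}} &= \mathbf{x} + t_{n+1}\,\mathbf{z}, \qquad \mathbf{z} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}) \\
\hat{\mathbf{x}}^{\phi}_{t_n} &= \mathbf{x}_{t_{n+1}} - (t_n - t_{n+1})\, t_{n+1}\, s_\phi(\mathbf{x}_{t_{n+1}}, t_{n+1}) \\
\mathcal{L}_{\mathrm{CD}} &= \mathbb{E}\Big[\lambda(t_n)\, d\big(f_\theta(\mathbf{x}_{t_{n+1}}, t_{n+1}),\; f_{\theta^-}(\hat{\mathbf{x}}^{\phi}_{t_n}, t_n)\big)\Big]
\end{aligned}
```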

The second method is to train a consistency model from scratch. The researchers were able to produce good results with models trained from scratch as well.

Training Process

During training, we follow the destruction path that takes a clear image to complete noise. The training process looks at pairs of adjacent points on this destruction path.
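A sketch of how such pairs can be built, assuming the setup we understand from the paper: a point on the destruction path at time t is the clean image plus t times a fixed Gaussian noise sample z, and time is discretized with the Karras et al. schedule (ρ = 7, from ε = 0.002 to T = 80). The choice N = 18 and the tiny 3-pixel image are illustrative.

```python
import random

def time_steps(N, eps=0.002, T=80.0, rho=7.0):
    """N time points from eps (almost clean) to T (pure noise), denser near eps."""
    a, b = eps ** (1 / rho), T ** (1 / rho)
    return [(a + i / (N - 1) * (b - a)) ** rho for i in range(N)]

def point_on_path(clean_image, z, t):
    # Destruction path: the same clean image plus the same noise z, scaled by t.
    return [x + t * zi for x, zi in zip(clean_image, z)]

rng = random.Random(0)
clean_image = [0.2, -0.5, 0.9]
z = [rng.gauss(0.0, 1.0) for _ in clean_image]
ts = time_steps(N=18)
# Adjacent pairs (t_n, t_{n+1}) on the same path, as used for training.
pairs = [(point_on_path(clean_image, z, t_n), point_on_path(clean_image, z, t_next))
         for t_n, t_next in zip(ts[:-1], ts[1:])]
print(len(pairs))  # 17
```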

We feed each image of the pair to the consistency model, asking it to restore the original cat image. The two results are generally not identical. The goal of training is to minimize the difference between them, so that when the model is applied to points on the same destruction path, it produces the same clear cat image. Training continues until this difference is small enough.
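The loss for one pair can be sketched as below for the from-scratch variant, where both points are built from the same clean image and the same noise (in distillation, the less noisy point would instead come from one ODE-solver step of the teacher). The slowly updated target model, maintained as an exponential moving average of the online model, follows the paper; the squared-L2 distance is one of the distances it considers. The tiny linear "network", `mu` and the chosen times are hypothetical, and the gradient step is omitted: a real implementation would backpropagate through `pred` only, using an autodiff framework.

```python
import math
import random

SIGMA_DATA, EPS = 0.5, 0.002

def c_skip(t):
    return SIGMA_DATA ** 2 / ((t - EPS) ** 2 + SIGMA_DATA ** 2)

def c_out(t):
    return SIGMA_DATA * (t - EPS) / math.sqrt(SIGMA_DATA ** 2 + t ** 2)

def consistency_fn(params, x, t):
    # Hypothetical tiny "network" F(x, t) = w * x + b, wrapped in the skip parameterization.
    w, b = params
    return [c_skip(t) * xi + c_out(t) * (w * xi + b) for xi in x]

def consistency_training_loss(params, target_params, clean_image, t_n, t_next, rng):
    z = [rng.gauss(0.0, 1.0) for _ in clean_image]               # one noise sample shared by both points
    x_next = [x + t_next * zi for x, zi in zip(clean_image, z)]  # noisier point on the path
    x_n = [x + t_n * zi for x, zi in zip(clean_image, z)]        # less noisy point on the same path
    pred = consistency_fn(params, x_next, t_next)                # online model
    target = consistency_fn(target_params, x_n, t_n)             # target model (no gradient in practice)
    # Mean squared difference between the two "restored" images.
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(pred)

def ema_update(target_params, params, mu=0.95):
    # The target model slowly tracks the online model.
    return tuple(mu * tp + (1 - mu) * p for tp, p in zip(target_params, params))

rng = random.Random(0)
params = (0.3, 0.0)
target_params = params
loss = consistency_training_loss(params, target_params, [0.2, -0.5, 0.9], t_n=1.0, t_next=2.0, rng=rng)
target_params = ema_update(target_params, params)
```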

References

- Paper – https://arxiv.org/abs/2303.01469

- Code – https://github.com/openai/consistency_models


All credit for the research goes to the researchers who wrote the paper we covered in this post.